Training Anima LoRAs with sd-scripts on AMD Strix Halo

This guide walks you through setting up the tools and workflow for training a custom LoRA (Low-Rank Adaptation) using sd-scripts on AMD Strix Halo APUs. It focuses on training Anima models. For a similar guide on musubi-tuner, see Training Klein 9B/4B LoRAs with Musubi-Tuner or Training Z-Image LoRAs.

The goal of this guide is to help you set up the tools and environment for LoRA training. The actual training process requires experimentation to find settings that work best for your specific use case. These are the steps I followed to train a LoRA for Anima based on a specific anime style, with examples from my own training where applicable.

Hardware Requirements

This guide assumes a Strix Halo with 128GB of RAM for the default path. Refer to the Out of VRAM section if you run into problems.

Prerequisites

Make sure you have the following installed:

- uv - A fast Python package installer and manager

- git - Version control system

You can install uv and git on most Linux distributions:

# Arch Linux

sudo pacman -S uv git

# Ubuntu/Debian

sudo apt install uv gitInstallation

Download the sd-scripts wrapper script and copy it where you want. This is a small script I created to simplify the procedure. The script is easy to read, so if you are curious, have a look at what it does!

Now cd to the directory where the script is located. From there, you will need to:

# Make the script executable

chmod +x sd-scripts.sh

# Install sd-scripts

./sd-scripts.sh setupBy default this will install sd-scripts in your home directory. You can override the install directory:

export SD_SCRIPTS_INSTALL_DIR="/sd-scripts/installation/path"The script defaults to downloading dependencies for Strix Halo (gfx1151). This can also be overridden:

# You can check all available architectures here: https://rocm.nightlies.amd.com/v2-staging/

# The example below is for Strix Point

export GFX_NAME="gfx1150"All overrides must be performed before running the setup step.

Downloading Models

We need to download the Anima base model and its components. Anima uses a different architecture than Flux, requiring specific model files.

All Anima model files are available in the official HuggingFace repository of the model

Anima Model Components

- DIT Model: Download

diffusion_models/anima-preview2.safetensors - VAE: Download

vae/qwen_image_vae.safetensors - Qwen3 Model: Download

text_encoders/qwen_3_06b_base.safetensors

Note: The exact model files and their locations may vary. Check the official Anima repository on HuggingFace for the latest model releases.

After downloading, note the paths to your model files. You’ll need them in the next steps.

Project Creation

We will now create the LoRA project. Once again, we will rely on the script to create the initial directory structure and standard sd-scripts configuration for Anima.

# Set the model version to "anima"

export MODEL_VERSION="anima"

# Set the path to the DIT model (Anima diffusion transformer preview-2)

export DIT_MODEL="/path/to/diffusion_models/anima-preview2.safetensors"

# Set the path to the VAE (Qwen VAE)

export VAE_MODEL="/path/to/vae/qwen_image_vae.safetensors"

# Set the path to the Qwen3 model

export QWEN3_MODEL="/path/to/text_encoders/qwen_3_06b_base.safetensors"

# Set the path to the T5-XXL tokenizer (optional, needed only if you want to use a custom tokenizer)

export T5XXL_TOKENIZER="/path/to/t5xxl/tokenizer"

# Set the project name. A folder with this name will be created

export PROJECT_NAME="my-anima-lora"

# Create the project

./sd-scripts.sh createDataset Preparation

A good dataset is crucial for training a useful LoRA. The following are technical guidelines and rules of thumb to get you started. However, experimentation is key - different datasets and styles may require different approaches.

Adding Images

Place your training images in the dataset directory of your project. Each image should have:

- File format: PNG, JPG, or WEBP

- Resolution: Aim for 1024x1024. Using a single resolution for all images can reduce the number of batches, which is good

- Aspect ratio: Square images work best but other ratios will work too

As a rule of thumb, anywhere from 20-200 images will work. The quality of the images is more important than the number.

Adding Captions

For each image, create a corresponding text file with the same name but .txt extension:

dataset/

├── image1.jpg

├── image1.txt # Caption for image1.jpg

├── image2.png

├── image2.txt # Caption for image2.png

└── ...Caption rules of thumb:

- For styles: describing the scene but not the style works. I had good results with just empty captions.

- For characters: a trigger word + a short description seems to work well.

Editing the Dataset Config

In the project directory, you will find a dataset.toml file. While usable as-is, here is the explanation of some of its parameters:

resolution: Target resolution for trainingbatch_size: How many images to process at once (reduce if you run out of VRAM)enable_bucket: Allows different aspect ratios (keeps more detail)num_repeats: How many times to cycle through the dataset per epoch (higher = more training)

Creating Reference Prompts

Another notable file is called reference_prompts.txt. Reference prompts are used to generate sample images during training, so you can see how the LoRA is progressing.

Example with a single prompt:

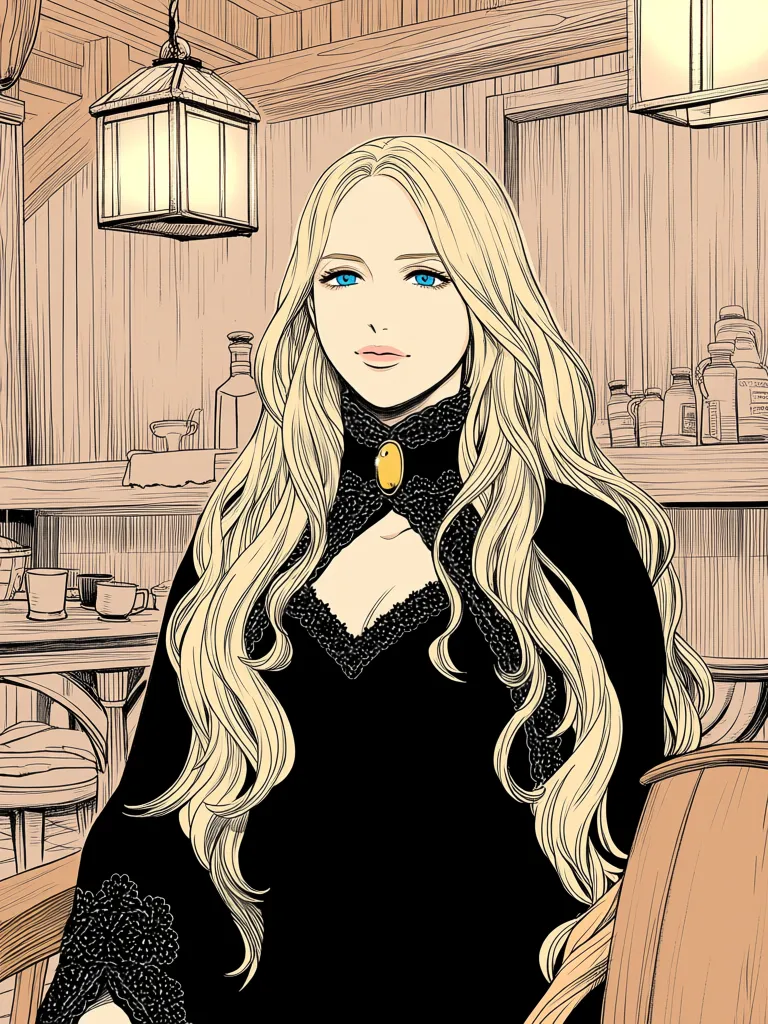

An illustration in @mikkoani style of a very young woman with fair skin and striking blue eyes, looking directly at the camera with a soft, serene expression. Her blonde hair is styled in an elegant updo, adorned with numerous small white flowers, possibly daisies, nestled throughout the curls. She wears a floral-patterned blouse with black, white, and gold flowers, a pearl earring in her right ear, and has a manicure with white nail polish. Her hands are gently cupped around her face, with her fingers lightly touching her cheeks. The background is a deep, dark blue, creating a dramatic contrast that highlights her features and the delicate details of her look. --w 1024 --h 1024 --d 42 --s 30Each line is a separate prompt that will be sampled during training. Adding as many as you need, but keep in mind that this will make the training session longer.

Training Configuration

The following explains the most relevant parameters from the training.toml file in your project directory:

network_dim: Dimension of the LoRA (8-16 is usually suitable for simple styles, 32-64 for more complex concepts or characters)network_alpha: Alpha value, typically half of network_dimlearning_rate: 1e-4 is a good starting point (adjust if the loss doesn’t decrease)max_train_epochs: How many training cycles (10-50, depending on dataset size)save_every_n_epochs: How often to save checkpointssave_state: Saves the training state with each checkpoint. This allows stopping and resuming training. It consumes more disk space and VRAMcompile: Enable torch.compile for faster training (default: true)sdpa: Use scaled dot product attention for better performance (default: true)

Important: All the settings mentioned above are starting defaults. There is no one-size-fits-all configuration. You will need to experiment with these values to find what works best for your specific dataset and goals. The values provided are based on my experience, but your results may vary significantly.

Running Training

# Run the training

./sd-scripts.sh trainNote: You are likely to see many warnings when running this command. They are harmless and can be ignored.

Resuming Training

If you have stopped the training or feel that the LoRA is undertrained even after finishing, you can resume training if you set save_state to true (which is the default) in training.toml. To resume, only run:

./sd-scripts.sh trainThis will automatically find the latest saved state and restart training from there.

Monitoring Training

During training, you’ll see:

- Loss values - Should decrease over time. If it stays flat or increases, your learning rate may be too high. However, do not rely too much on this value.

- Sample images - Generated every N epochs (or whatever you set) showing how the LoRA is learning

- Checkpoint files - Saved to the

outputdirectory of the project every N epochs (or whatever you set)

What to Look For

| Epoch | What to Check |

|---|---|

| 1-5 | Loss should start decreasing |

| 5-10 | Sample images should show the style emerging |

| 10-20 | Check for overfitting (samples look too much like training images) |

| 20+ | If loss is still decreasing, consider more epochs |

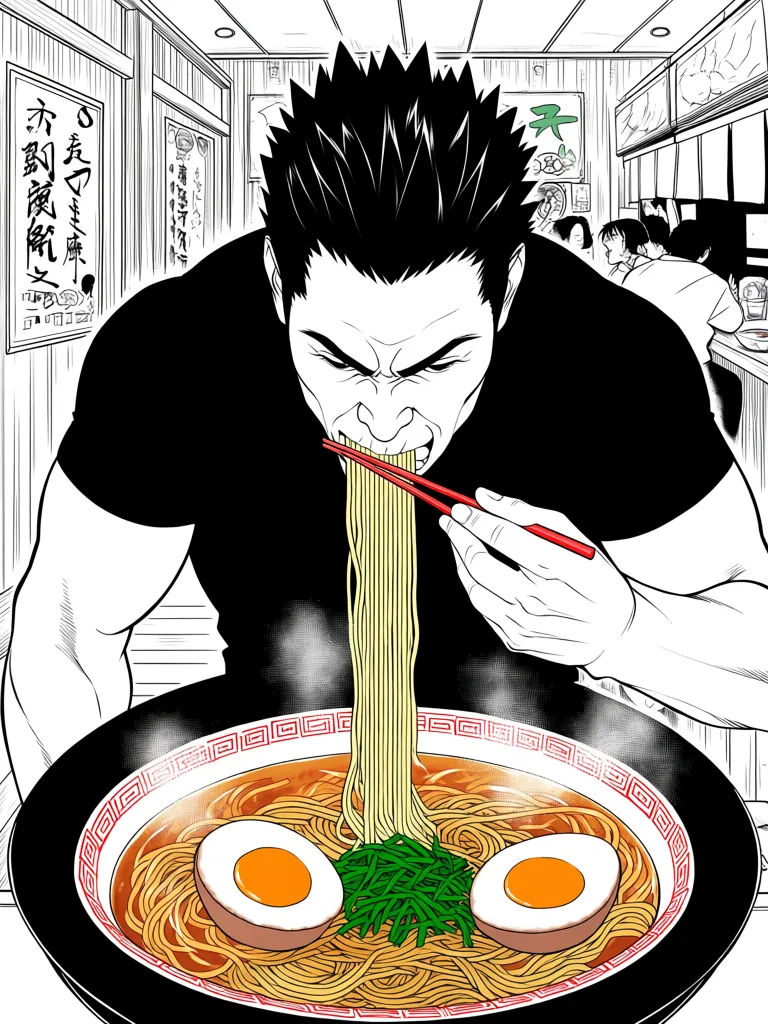

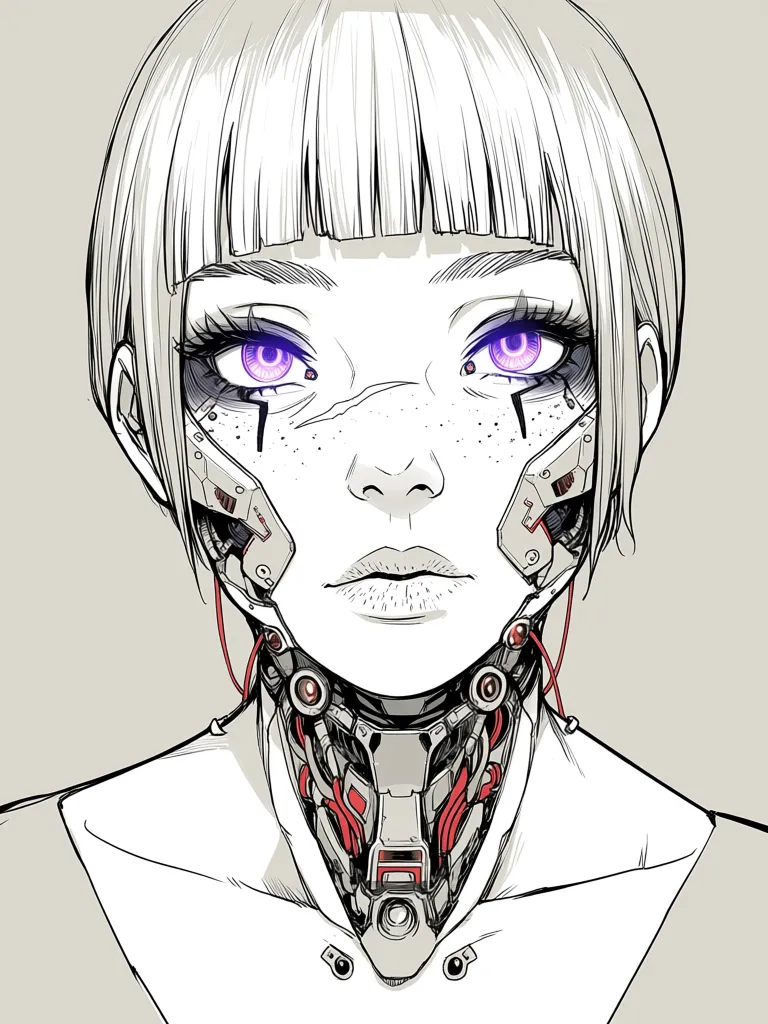

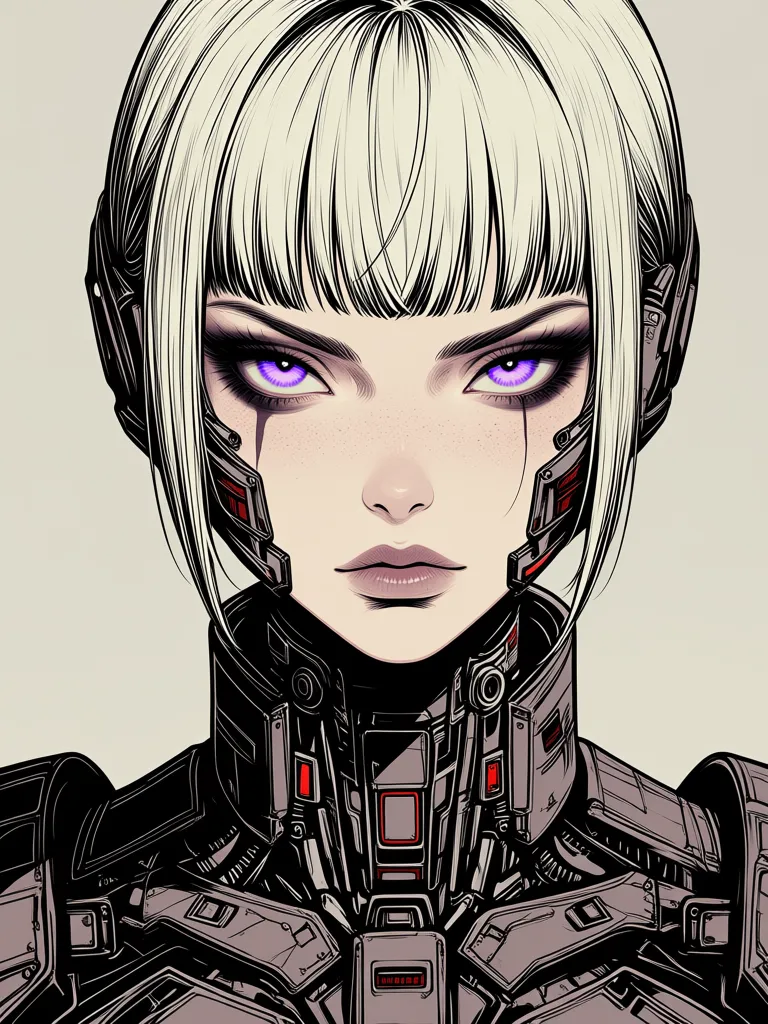

Here are some examples from my training:

| Epoch 0 | Epoch 6 | Epoch 12 | Epoch 20 | Epoch 30 |

|---|---|---|---|---|

|   |   |   |   |

| Start | Early learning | Mid-training | Almost there | Final |

I tested all most promising checkpoints using ComfyUI and went for the last one.

Using Your Trained LoRA

After training completes, you’ll have checkpoint files in the output directory of your project:

output/

├── my-anima-lora-000002.safetensors # Epoch 2 checkpoint

├── my-anima-lora-000004.safetensors # Epoch 4 checkpoint

├── my-anima-lora-000006.safetensors # Epoch 6 checkpoint

└── ...The final checkpoint won’t have a sequence number.

In ComfyUI

Important: Anima LoRAs require a conversion step for ComfyUI compatibility. This is done automatically for the last checkpoint after training, but you can also trigger it manually for other checkpoints.

-

Automatic conversion: After training completes, the final checkpoint is automatically converted and saved as

my-anima-lora_comfyui.safetensors -

Manual conversion: For any other checkpoint, run:

./sd-scripts.sh convert output/my-anima-lora-000016.safetensorsThis creates

my-anima-lora-000016_comfyui.safetensors -

Place the

_comfyui.safetensorsfile in your ComfyUImodels/loras/directory -

Add a

Load LoRAnode to your workflow -

Connect it to your Anima model nodes

-

Adjust the LoRA strength. Start with 1.0, but don’t be afraid to push it significantly higher or lower.

In other tools

Most tools that support Stable Diffusion LoRAs will work with Anima LoRAs. Look for a “Load LoRA” or similar node/module.

Results

Here are some example images generated using my LoRA. They are generated with the same prompt and seed using Anima.

Troubleshooting

Out of VRAM

If you get “out of memory” errors:

- Reduce

batch_sizeindataset.toml - Try

optimizer_type = "AdamW"intraining.toml(or use 8-bit optimizers if available) - Reduce

resolution(try 768x768) - Reduce

max_data_loader_n_workers(try 1) - Disable

compileintraining.tomlif it’s causing issues

Samples Look Bad

- Train for more epochs (style may need time to emerge)

- Check your dataset quality (images should be clear, captions should be good)

- Try a different

network_dim(higher for complex styles) - Adjust

learning_rateif the loss is not decreasing properly

Conclusion

You now have a complete workflow for setting up and training custom LoRAs with sd-scripts on AMD GPUs. Start with a small dataset (50-100 images) and experiment with different settings to find what works best for your use case.

For more information, check out:

- sd-scripts GitHub

- LoRA Theory and Practice

- My HuggingFace

- Training Klein 9B/4B LoRAs with Musubi-Tuner - For a similar guide on Flux training

- Training Z-Image LoRAs - For a similar guide on Z-Image training